Artificial Intelligence vs. Human Advocacy: The Future of Proving Medical Negligence

Artificial intelligence is rapidly reshaping the legal profession. From contract review to predictive analytics, algorithms now assist lawyers in ways that were unimaginable just a decade ago. In the field of medical negligence and nursing home abuse litigation, AI is emerging as a powerful tool, capable of processing vast amounts of clinical data at unprecedented speed.

However, as technology advances, a fundamental question remains unresolved: Can artificial intelligence replace human judgment in proving medical negligence, or does justice in elder care litigation still require human advocacy?

This article explores how AI is being used to analyze electronic medical records (EMRs), the ethical challenges raised by predictive algorithms, and why human judgment remains indispensable in high-stakes negligence cases involving vulnerable populations.

Medical Negligence in the Digital Age

Medical negligence is a tort with four elements: duty of care, breach, causation, and damages. In nursing home cases, these elements are applied within a highly regulated healthcare environment where documentation is extensive, technical, and often overwhelming. Modern nursing homes generate thousands of pages of data for a single resident, including:

- Electronic medical records (EMRs)

- Medication administration logs

- Incident and fall reports

- Staffing schedules

- Progress notes from nurses and physicians

Traditionally, reviewing this information required countless hours of manual analysis. Today, artificial intelligence offers a new approach.

Using AI to Analyze Electronic Medical Records

How AI Identifies Inconsistencies

AI-powered tools can analyze thousands of pages of EMRs in minutes. These systems use pattern recognition and natural language processing to identify:

- Gaps in documentation

- Contradictory entries between providers

- Delayed charting

- Missing assessments after reported incidents

- Medication errors or omissions

For example, an algorithm might flag that a resident was documented as “ambulatory” shortly before a fall, despite prior notes indicating limited mobility and a known fall risk. These inconsistencies are often critical in proving a breach of duty of care.
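The kind of check described in this example can be sketched in a few lines of Python. This is a minimal illustration, not how any particular review product works: the `Note` schema, the status labels ("ambulatory", "limited"), the event names, and the 7-day and 24-hour windows are all assumptions chosen for the example. Real EMR exports are far messier and would need natural language processing to reach this structured form.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional, Tuple


@dataclass
class Note:
    """One chart entry (hypothetical, simplified schema)."""
    charted_at: datetime
    author: str
    mobility: Optional[str] = None  # "ambulatory" or "limited", if recorded
    event: Optional[str] = None     # "fall" or "post_fall_assessment", if any


def mobility_conflicts(notes: List[Note],
                       window: timedelta = timedelta(days=7)) -> List[Tuple[Note, Note]]:
    """Pair each "ambulatory" entry with earlier "limited" entries inside the window."""
    ordered = sorted(notes, key=lambda n: n.charted_at)
    pairs = []
    for i, later in enumerate(ordered):
        if later.mobility != "ambulatory":
            continue
        for earlier in ordered[:i]:
            recent = later.charted_at - earlier.charted_at <= window
            if earlier.mobility == "limited" and recent:
                pairs.append((earlier, later))
    return pairs


def falls_without_assessment(notes: List[Note],
                             deadline: timedelta = timedelta(hours=24)) -> List[Note]:
    """Return fall entries with no assessment charted within the deadline."""
    assessments = [n.charted_at for n in notes if n.event == "post_fall_assessment"]
    return [
        n for n in notes
        if n.event == "fall"
        and not any(timedelta(0) <= t - n.charted_at <= deadline for t in assessments)
    ]


if __name__ == "__main__":
    t0 = datetime(2024, 3, 1, 8, 0)  # invented sample data
    chart = [
        Note(t0, "RN A", mobility="limited"),
        Note(t0 + timedelta(days=2), "LPN B", mobility="ambulatory"),
        Note(t0 + timedelta(days=2, hours=3), "LPN B", event="fall"),
    ]
    for earlier, later in mobility_conflicts(chart):
        print(f"Conflict: {earlier.author} charted 'limited', "
              f"{later.author} later charted 'ambulatory'")
    for fall in falls_without_assessment(chart):
        print(f"Fall at {fall.charted_at} has no assessment within 24 hours")
```

A flag from a check like this is only a lead for a human reviewer. Whether the conflicting entries reflect a charting error, a genuine change in condition, or neglect is a question the code cannot answer.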

Why This Matters in Nursing Home Abuse Cases

In nursing home litigation, facilities frequently argue that injuries were unavoidable due to age, frailty, or preexisting conditions. AI-assisted review helps attorneys test this narrative by exposing systemic documentation failures that suggest neglect rather than inevitability. From an evidentiary standpoint, AI makes review more efficient, but a flagged record is only a lead: it does not independently establish liability.

Predictive Algorithms and “Preventable Falls”

Can Algorithms Predict Negligence?

One of the most controversial developments in legal tech is the use of predictive algorithms to assess whether injuries (particularly falls) were preventable. These systems analyze variables such as:

- Prior fall history

- Medication profiles

- Cognitive impairment

- Staffing ratios

- Time of day and supervision levels

Based on these inputs, the system may assign an estimated probability that a particular fall was preventable.
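To make the idea of "assigning a probability" concrete, here is a minimal sketch of a logistic scoring model over the variables listed above. Everything specific in it is an assumption for illustration: the feature names, the weights, and the bias are invented, not taken from any validated clinical or legal tool, and a real system would have to learn its parameters from data and be tested for bias.

```python
import math
from typing import Dict

# Hypothetical weights, invented for illustration only (not validated).
WEIGHTS: Dict[str, float] = {
    "prior_falls": 0.6,           # number of documented prior falls
    "sedating_meds": 0.4,         # count of sedating medications
    "cognitive_impairment": 0.8,  # 1 if documented, else 0
    "residents_per_aide": 0.1,    # staffing ratio on the shift
    "night_shift": 0.5,           # 1 if the fall occurred at night, else 0
}
BIAS = -2.0  # hypothetical baseline log-odds


def contributions(features: Dict[str, float]) -> Dict[str, float]:
    """Per-factor contribution to the log-odds, so the score can be explained."""
    return {name: WEIGHTS[name] * features[name] for name in WEIGHTS}


def preventable_probability(features: Dict[str, float]) -> float:
    """Map the weighted sum of risk factors to a value between 0 and 1."""
    log_odds = BIAS + sum(contributions(features).values())
    return 1.0 / (1.0 + math.exp(-log_odds))


if __name__ == "__main__":
    resident = {
        "prior_falls": 2,
        "sedating_meds": 1,
        "cognitive_impairment": 1,
        "residents_per_aide": 12,
        "night_shift": 1,
    }
    print(f"Estimated probability: {preventable_probability(resident):.2f}")
    for name, value in contributions(resident).items():
        print(f"  {name}: {value:+.2f} log-odds")
```

A linear model like this one is easy to inspect, because each factor's contribution can be printed and challenged. The output is still only a statistical estimate about similar cases, not a finding about what this facility owed this resident.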

Ethical and Legal Concerns

While predictive analytics can assist case evaluation, they raise serious ethical questions:

- Opacity: Many algorithms operate as “black boxes,” making it difficult to explain how conclusions are reached.

- Bias: If trained on flawed or incomplete data, AI may reinforce systemic underreporting of neglect.

- Overreliance: There is a risk that lawyers or courts may treat algorithmic predictions as objective truth rather than probabilistic tools.

In tort law, liability is not determined by statistical likelihood alone. It is determined by whether a defendant breached a legally recognized duty of care under specific circumstances. Algorithms can inform that analysis, but they cannot replace it.

The Limits of Artificial Intelligence in Elder Care Litigation

Medical Negligence Is Not Merely Data-Driven

Elder care litigation involves deeply human considerations:

- Pain and suffering

- Dignity and autonomy

- Emotional trauma

- Family trust and betrayal

These elements are central to damage assessments but are inherently resistant to quantification. AI can identify anomalies in records, but it cannot:

- Evaluate credibility

- Cross-examine witnesses

- Assess intent or recklessness

- Persuade a jury

Medical negligence cases are ultimately narratives about what should have been done, what went wrong, and who must be held accountable.

Why Human Judgment Remains Essential

Advocacy Requires Moral Reasoning

At its core, negligence law reflects societal judgments about acceptable conduct. Determining whether care was “reasonable” under the circumstances requires moral and contextual reasoning that algorithms cannot perform. For example:

- Was staffing “adequate” given the acuity of residents?

- Did the facility prioritize cost savings over safety?

- Were warning signs ignored due to institutional indifference?

These questions go beyond pattern recognition. Trial lawyers experienced in medical negligence litigation involving Atlanta nursing homes understand that data alone cannot establish liability without human judgment and courtroom advocacy.

Courtrooms Are Human Institutions

Judges and juries do not decide cases based solely on data outputs. They respond to:

- Testimony

- Credibility

- Narrative coherence

- Emotional resonance

Human advocacy translates technical evidence into compelling legal arguments, something no algorithm can yet do.

Integrating AI with Traditional Trial Advocacy

The Hybrid Model

The future of medical negligence litigation lies not in choosing between AI and human advocacy, but in integrating both. Modern trial lawyers increasingly use AI to:

- Accelerate document review

- Identify evidentiary weaknesses

- Develop more focused discovery strategies

However, the interpretation, presentation, and ethical framing of that evidence remain human tasks.

Bill Holbert: Old-School Advocacy Meets Modern Evidence

This hybrid approach is exemplified by trial lawyers like Bill Holbert of Holbert Law, who combines traditional courtroom skill with cutting-edge evidentiary techniques. As a former defense attorney, Holbert understands how healthcare institutions and insurers structure their defenses, from minimizing documentation to reframing neglect as “unavoidable decline”. That insight allows him to use AI not as a shortcut, but as a strategic tool. By pairing advanced record analysis with:

- Rigorous expert testimony

- Cross-examination grounded in real-world care standards

- Clear explanations of duty of care and breach

Holbert demonstrates how technology can strengthen human advocacy rather than replace it.

Implications for Law Students and Legal Education

Bridging Theory and Practice

For law students studying torts, medical negligence, or legal ethics, AI-assisted litigation offers a powerful case study in applied theory. Key takeaways include:

- Duty of care remains a normative concept, not a computational one.

- Liability depends on legal standards, not predictive probabilities.

- Ethical lawyering requires understanding both technological capabilities and limitations.

Understanding how AI is used in real-world elder care litigation helps students move beyond abstraction and see how doctrine functions under pressure.

The Future of Proving Medical Negligence

Artificial intelligence will continue to reshape how evidence is gathered and analyzed. In medical negligence and nursing home abuse cases, it offers unprecedented efficiency and insight. However, justice in elder care litigation cannot be automated.

The future belongs to lawyers who understand technology without surrendering judgment, who can analyze data while still telling human stories of harm, responsibility, and accountability. In that balance between innovation and advocacy, the law preserves its most essential function: protecting the vulnerable through human judgment, not machine prediction.

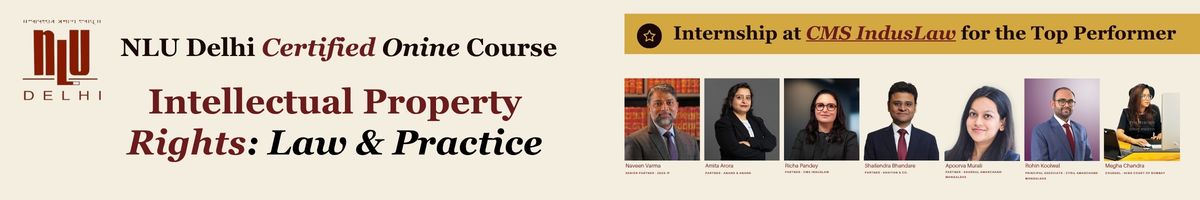
